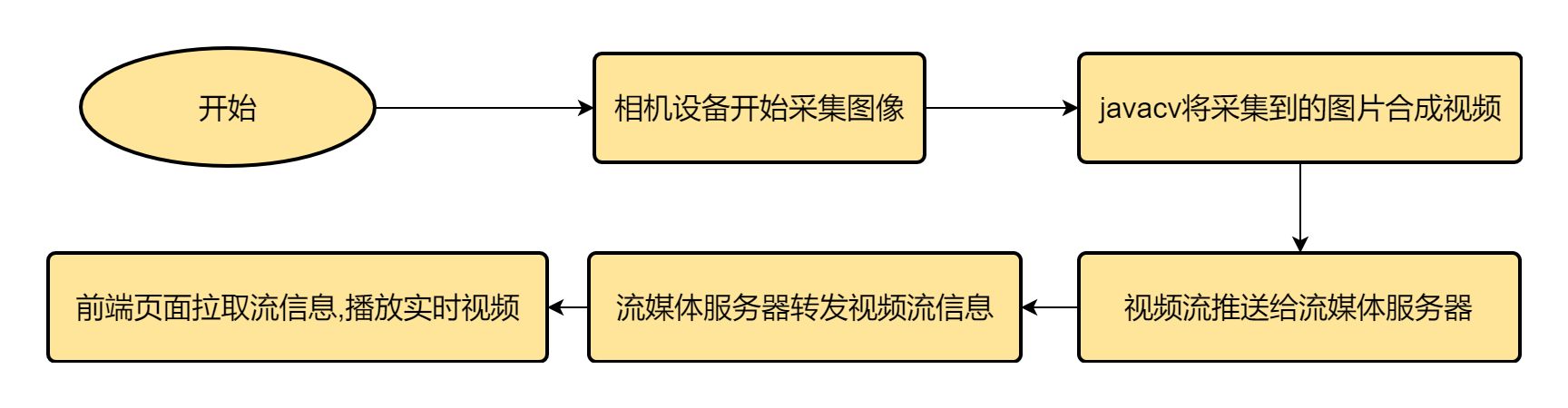

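In an IoT environment, hardware devices capture images, which are assembled into a video stream and pushed to the backend

Environment setup

Industrial camera: captures the images

Java backend: obtains the images and pushes the stream to the streaming media server

Streaming media server: receives pushed streams and serves them to players

React frontend: displays the video

The flow is as follows:

Java streaming code

Create a utility class that handles image streaming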

    package com.jscoe.device.handle;

    import com.jscoe.commons.util.ImageUtils;
    import lombok.extern.slf4j.Slf4j;
    import org.bytedeco.ffmpeg.global.avcodec;
    import org.bytedeco.javacv.FFmpegFrameRecorder;
    import org.bytedeco.javacv.FFmpegLogCallback;
    import org.bytedeco.javacv.Frame;
    import org.bytedeco.javacv.Java2DFrameConverter;
    import org.springframework.stereotype.Component;

    import javax.annotation.PreDestroy;
    import java.awt.image.BufferedImage;
    import java.util.concurrent.atomic.AtomicBoolean;

    /**
     * Image stream handler
     * author honor
     */
    @Slf4j
    @Component
    public class ImageStream {

        /**
         * Video stream parameters
         */
        private FFmpegFrameRecorder recorder;
        private Java2DFrameConverter converter = null;
        // Frame rate of the pushed video
        private Integer FRAME_RATE = 12;
        // A GOP size of 1 makes every frame a key frame
        private Integer GOP_SIZE = 1;
        private String FORMAT_RTSP = "rtsp";
        private String FORMAT_RTMP = "rtmp";
        private final AtomicBoolean startFlag = new AtomicBoolean(false);

        /**
         * Push over RTSP
         */
        public void initRtspRecorder(String pushUrl, int width, int height) {
            try {
                FFmpegLogCallback.set();
                converter = new Java2DFrameConverter();
                recorder = new FFmpegFrameRecorder(pushUrl, width, height);
                recorder.setVideoCodec(avcodec.AV_CODEC_ID_H264);
                recorder.setGopSize(GOP_SIZE);
                recorder.setFormat(FORMAT_RTSP);
                recorder.setFrameRate(FRAME_RATE);
                recorder.setOption("rtsp_transport", "tcp"); // RTSP transport protocol: tcp or udp
                recorder.start();
                startFlag.set(true);
                log.info("recorder init ok");
            } catch (Exception e) {
                log.warn("recorder init fail: ", e);
                destroy();
            }
        }

        /**
         * Push over RTMP
         */
        public void initRtmpRecorder(String pushUrl, int width, int height) {
            try {
                FFmpegLogCallback.set();
                converter = new Java2DFrameConverter();
                recorder = new FFmpegFrameRecorder(pushUrl, width, height);
                recorder.setVideoCodec(avcodec.AV_CODEC_ID_H264);
                recorder.setGopSize(GOP_SIZE);
                // recorder.setAudioCodec(avcodec.AV_CODEC_ID_AAC);
                recorder.setFormat("flv"); // RTMP carries FLV, so the muxer must be set explicitly
                recorder.setFrameRate(FRAME_RATE);
                recorder.start();
                startFlag.set(true);
                log.info("recorder init ok");
            } catch (Exception e) {
                log.warn("recorder init fail: ", e);
                destroy();
            }
        }

        public void pushStream(String pushUrl, String deviceNo, int width, int height, BufferedImage bufferedImage) {
            // Lazily start the recorder on the first frame
            if (!startFlag.get()) {
                initRtspRecorder(pushUrl, width, height);
            }
            if (!startFlag.get()) {
                return; // init failed; skip this frame and retry on the next call
            }
            try {
                // Compress the image
                BufferedImage bufferedImageCompressed = ImageUtils.compress(bufferedImage);
                // Add a timestamp watermark to the video
                // Date date = new Date();
                // SimpleDateFormat dateFormat = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");
                // String formattedDate = dateFormat.format(date);
                // BufferedImage bufferedImageWithWaterMark = ImageUtils.addTextWaterMark(bufferedImageCompressed, Color.RED, 80, formattedDate);
                // Reuse the converter created during init instead of allocating one per frame
                Frame frame = converter.getFrame(bufferedImageCompressed);
                recorder.record(frame);
            } catch (Exception e) {
                log.warn("recorder record fail: ", e);
            }
        }

        @PreDestroy
        public void destroy() {
            try {
                // Release resources even when init failed before startFlag was set
                if (recorder != null) {
                    recorder.close();
                    recorder = null;
                }
                if (converter != null) {
                    converter.close();
                    converter = null;
                }
                startFlag.set(false);
                log.info("recorder destroy ok");
            } catch (Exception e) {
                log.warn("recorder destroy fail: ", e);
            }
        }
    }

Then simply call pushStream() in a loop.

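The pushUrl in the code is the address the stream is pushed to. It depends on the streaming media server you use. I use ZLMediaKit, for example. When a stream is pushed over RTSP, its four-tuple is

rtsp (fixed to rtsp for RtspMediaSource), somedomain.com, live, 0

and the playback URLs for that media source are:

rtsp://somedomain.com/live/0

rtsps://somedomain.com/live/0

rtsp://127.0.0.1/live/0?vhost=somedomain.com

rtsps://127.0.0.1/live/0?vhost=somedomain.com

For example: rtsp://192.168.10.98:554/basler/23448102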
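The mapping from the four-tuple to a URL can be sketched in a few lines. This is only an illustration of the URL shapes listed above; the class `StreamUrls` and its method names are hypothetical and not part of ZLMediaKit or JavaCV.

```java
/** Hypothetical helper that assembles RTSP URLs from vhost, app and stream id. */
final class StreamUrls {
    private StreamUrls() {
    }

    /** URL form where the host part of the URL is the vhost itself. */
    static String rtsp(String vhost, String app, String streamId) {
        return "rtsp://" + vhost + "/" + app + "/" + streamId;
    }

    /** URL form that connects by IP address and passes the vhost as a query parameter. */
    static String rtspViaIp(String ip, String vhost, String app, String streamId) {
        return "rtsp://" + ip + "/" + app + "/" + streamId + "?vhost=" + vhost;
    }
}
```

With the values from the text, `StreamUrls.rtsp("somedomain.com", "live", "0")` gives the first URL in the list and `StreamUrls.rtspViaIp("127.0.0.1", "somedomain.com", "live", "0")` gives the third.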

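The bufferedImage parameter in the code is the individual frame to push; you need to obtain it from your own hardware device.

When pushing, keep the frame rate at 10 fps or higher, otherwise the video lags noticeably. The pushStream() method should also be called at the same rate as the configured frame rate, otherwise the latency will be high as well.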
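One way to keep the call rate aligned with the frame rate is a fixed-rate scheduler instead of a bare loop. The sketch below is an assumption about how this could be wired up, not the author's code; `FramePacer` and its methods are hypothetical names, and the `Runnable` stands in for "grab one image from the camera and call pushStream()".

```java
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.ScheduledFuture;
import java.util.concurrent.TimeUnit;

/** Hypothetical helper that runs a frame-pushing task at a fixed frame rate. */
final class FramePacer {
    private FramePacer() {
    }

    /** Interval between two frames in microseconds for the given frame rate. */
    static long periodMicros(int frameRate) {
        if (frameRate <= 0) {
            throw new IllegalArgumentException("frameRate must be positive");
        }
        return 1_000_000L / frameRate;
    }

    /** Schedules pushOneFrame so that it runs frameRate times per second. */
    static ScheduledFuture<?> start(ScheduledExecutorService scheduler,
                                    int frameRate,
                                    Runnable pushOneFrame) {
        return scheduler.scheduleAtFixedRate(
                pushOneFrame, 0, periodMicros(frameRate), TimeUnit.MICROSECONDS);
    }
}
```

A call such as `FramePacer.start(Executors.newSingleThreadScheduledExecutor(), 12, task)` would match the FRAME_RATE of 12 used in the class above. Note that scheduleAtFixedRate stops rescheduling a task once it throws, so the task should handle its own exceptions, as pushStream() does.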

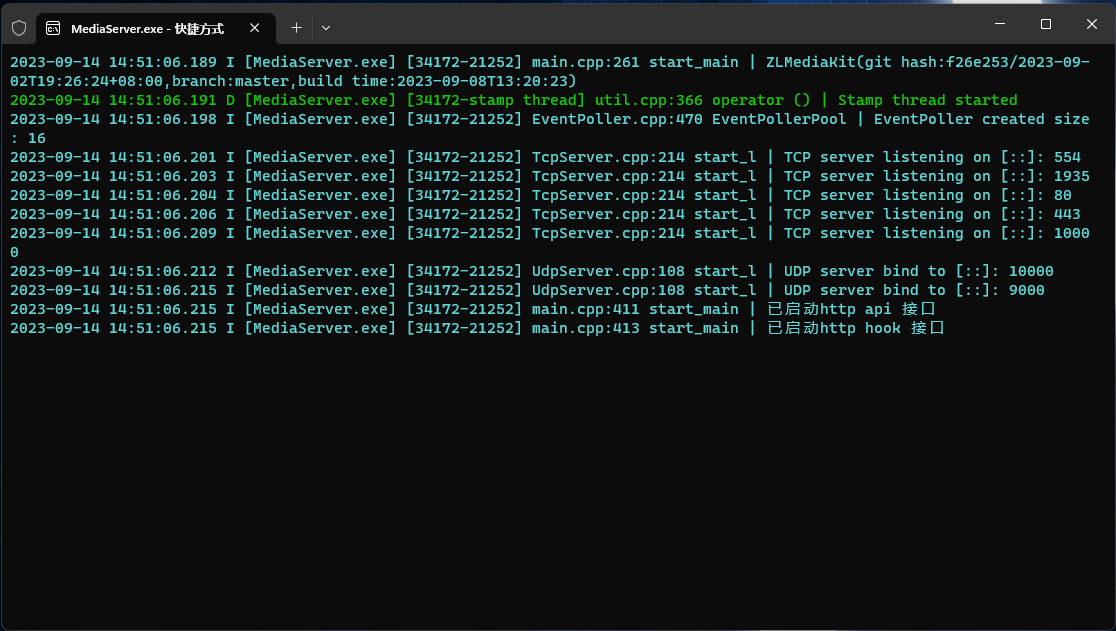

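Deploying the streaming media server

This project uses the ZLMediaKit streaming media server. Its advantages: it is open source, well documented, offers a rich set of RESTful and hook APIs, and is easy to deploy.

I recommend compiling and deploying ZLMediaKit locally rather than using Docker. In my Docker deployment the UDP transport protocol was not supported and the video latency was higher.

The complete deployment guide for ZLMediaKit is on GitHub. Below is a simple deployment procedure for Windows:

ZLMediaKit is written in C++. To compile it you need Visual Studio >= 2015; CLion also works.

If you use CLion, set the compiler to Visual Studio's MSVC rather than MinGW. To do so, open the settings page via File -> Settings.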

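Fetch the project:

    # Users in mainland China are advised to download from the gitee mirror
    git clone --depth 1 https://gitee.com/xia-chu/ZLMediaKit
    cd ZLMediaKit
    # Do not forget to run this command
    git submodule update --init

Then open the project in your development tool and build it, choosing the release build type. During the build you may see warnings that openssl and similar libraries cannot be found. Don't worry: these are optional dependencies and do not have to be installed.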

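Running

1. Go to the ZLMediaKit/release/windows/Debug directory (the last path segment should match your build type, so it may be Release if you built in release mode)

2. Double-click MediaServer to start it

3. You can also start it from cmd or PowerShell; run MediaServer -h to see the startup options

A successful start looks like this:

You can see that the streaming media server is listening on port 554, which is the port number in the RTSP address mentioned above.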
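If you want to confirm from the Java side that the server is reachable before pushing, a plain TCP connection attempt is enough. This is a minimal sketch using only the JDK; `PortCheck` is a hypothetical name, and a successful connection only shows that something is listening on the port, not that it is ZLMediaKit.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

/** Hypothetical helper that checks whether a TCP port accepts connections. */
final class PortCheck {
    private PortCheck() {
    }

    /** Returns true if a TCP connection to host:port succeeds within the timeout. */
    static boolean isListening(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Connection refused, timed out, or host unreachable
            return false;
        }
    }
}
```

For the setup in this article the call would be `PortCheck.isListening("192.168.10.98", 554, 1000)`.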

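Receiving and displaying the stream in React

First, note that ZLMediaKit can republish a stream in several formats at the same time. For example, my backend pushes to rtsp://192.168.10.98:554/basler/23448102, but React does not have to pull it over RTSP. It can also receive the stream as FLV at http://192.168.10.98/basler/23448102.live.flv. All of this can be configured in the config.ini file; for the exact URL rules, see the ZLMediaKit playback URL rules.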
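The relation between the two addresses above can be written down as a small conversion. This sketch only reproduces the pattern visible in the example (same host and path, `.live.flv` suffix, default HTTP port); `PlayUrls` is a hypothetical name, and the actual HTTP port depends on your config.ini.

```java
import java.net.URI;

/** Hypothetical helper that derives the HTTP-FLV play URL from an RTSP push URL. */
final class PlayUrls {
    private PlayUrls() {
    }

    /** Assumes the HTTP server listens on the default port 80. */
    static String toHttpFlv(String rtspUrl) {
        URI uri = URI.create(rtspUrl);
        // The path has the form "/{app}/{streamId}" and is reused unchanged
        return "http://" + uri.getHost() + uri.getPath() + ".live.flv";
    }
}
```

Calling `PlayUrls.toHttpFlv("rtsp://192.168.10.98:554/basler/23448102")` yields the FLV address quoted in the text.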

Once the backend streaming code and the streaming media server are running, React can pull the stream. The code is as follows:

Component:

    import React, {useEffect, useRef} from "react";
    import flvjs from "flv.js";

    interface FlvPlayerProps {
        className?: string | undefined;
        style?: React.CSSProperties;
        url: string;
        type?: 'flv' | 'mp4';
        isLive?: boolean;
        controls?: boolean | undefined;
        autoPlay?: boolean | undefined;
        muted?: boolean | undefined;
        height?: number | string | undefined;
        width?: number | string | undefined;
        videoProps?: React.DetailedHTMLProps<
            React.VideoHTMLAttributes<HTMLVideoElement>,
            HTMLVideoElement
        >;
        flvMediaSourceOptions?: flvjs.MediaDataSource;
        flvConfig?: flvjs.Config;
        onError?: (err: any) => void;
    }

    const FlvPlayer: React.FC<FlvPlayerProps> = (props) => {
        const {
            className,
            style,
            url,
            type = 'flv',
            isLive,
            controls,
            autoPlay,
            muted,
            height,
            width,
            videoProps,
            flvMediaSourceOptions,
            flvConfig,
            onError,
        } = props;

        const videoRef = useRef<HTMLVideoElement>(null);
        const flvPlayerRef = useRef<flvjs.Player | null>(null);

        useEffect(() => {
            if (flvjs.isSupported() && videoRef.current) {
                // Create the player and bind it to the <video> element
                const flvPlayer = flvjs.createPlayer({
                    type: type,
                    url: url,
                    isLive: isLive,
                    ...flvMediaSourceOptions,
                }, {
                    ...flvConfig,
                });
                flvPlayer.attachMediaElement(videoRef.current);
                flvPlayer.load();
                const playPromise = flvPlayer.play();
                flvPlayerRef.current = flvPlayer;
                if (playPromise !== undefined) {
                    // Ignore rejections such as autoplay being blocked by the browser
                    playPromise.catch(() => {
                    });
                }
                flvPlayer.on(flvjs.Events.ERROR, (err) => {
                    if (onError) {
                        onError(err);
                    }
                });
            } else {
                console.error('flv.js is not supported');
            }
            // Tear the player down when the url changes or the component unmounts
            return () => {
                if (flvPlayerRef.current) {
                    flvPlayerRef.current.pause();
                    flvPlayerRef.current.unload();
                    flvPlayerRef.current.detachMediaElement();
                    flvPlayerRef.current.destroy();
                    flvPlayerRef.current = null;
                }
            };
        }, [url]);

        return <>
            <video
                ref={videoRef}
                className={className}
                style={style}
                controls={controls}
                autoPlay={autoPlay}
                muted={muted}
                height={height}
                width={width}
                {...videoProps}
            />
        </>
    }

    export default FlvPlayer;

Usage:

    import React from 'react';
    import FlvPlayer from "./component/flv-player";

    function App() {
        return (
            <div>
                <FlvPlayer url="http://192.168.10.98/basler/23448102.live.flv"
                           type="flv"
                           isLive={true}
                           muted={true}
                           controls={true}
                           autoPlay={true}
                />
            </div>
        );
    }

    export default App;

The React usage is adapted from this article: https://ithere.net/article/1701421986649493505 (TimothyC)